Azure Data Factory is a powerful tool for managing and processing data in the cloud. One of the common tasks in data processing is retrieving the latest file from a set of folders. This can be a time-consuming and error-prone task, especially when dealing with large volumes of data.

You should create each pipeline in a separate folder. No, you shouldn't change the location of the pipeline.json file to another folder in the Git repo.

Passing file names from ForEach to Data Flow - Azure Data Factory. I am triggering a Mapping Data Flow inside a ForEach activity and passing it the file name. I am trying to read ADLS files in a directory, read the content of each file, do some processing, and store the file in ADLS, but the destination file name will depend on one of the column values of the input file.

Azure Data Factory: loop through multiple files in an ADLS container and load them into one target Azure SQL table with Lookup and ForEach activities. Using a Get Metadata activity, I have successfully retrieved a list of files and folders from an on-premises folder. The list contains both files and folders, and the folders in the list are causing an issue in later processing. ForEach gets the file list from the Filter activity, then iterates over the list and passes each file to the Copy activity and the Delete activity.

Now, when I started debugging, I got error 2200, and it says userBlobDoesNotExists. Here is the error output for the copy activity: "copyDuration": 3, "Message": "ErrorCode=UserErrorSourceBlobNotExist,'Type=.HybridDeliveryException,Message=The required Blob is missing. ContainerName:, path: employeeinput/workdetail.csv.,Source=Microsoft.DataTransfer.

As per your pipeline structure, in the next trigger run it will only copy the triggered csv file, after copying and deleting every file from the source in the first trigger run. So, first copy and delete every csv file in the source by running the pipeline in debug mode with the above pipeline structure, and for the trigger run change the pipeline structure like below.
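The Filter step mentioned above, which removes folders from the Get Metadata list before ForEach sees it, can be sketched in plain Python. This is only an illustration of the logic, not Data Factory code: it assumes each child item is an object with a `name` and a `type` of either `File` or `Folder`, and the function name `keep_files` and the sample entries `archive` and `salary.csv` are made up for the example. In a pipeline, the same condition is usually written as an expression along the lines of `@equals(item().type, 'File')` on the Filter activity.

```python
from typing import Dict, List

# Assumed shape of one entry in the Get Metadata child item list:
# {"name": "<file or folder name>", "type": "File" | "Folder"}
ChildItem = Dict[str, str]


def keep_files(child_items: List[ChildItem]) -> List[ChildItem]:
    """Return only the entries whose type is 'File', preserving order."""
    return [item for item in child_items if item.get("type") == "File"]


# Hypothetical listing: two csv files and one folder.
sample_items: List[ChildItem] = [
    {"name": "workdetail.csv", "type": "File"},
    {"name": "archive", "type": "Folder"},
    {"name": "salary.csv", "type": "File"},
]

files_only = keep_files(sample_items)
for item in files_only:
    # Each remaining item is what ForEach would hand to Copy and Delete.
    print(item["name"])
```

With the folder entry removed, the later Copy and Delete steps only ever receive file names.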

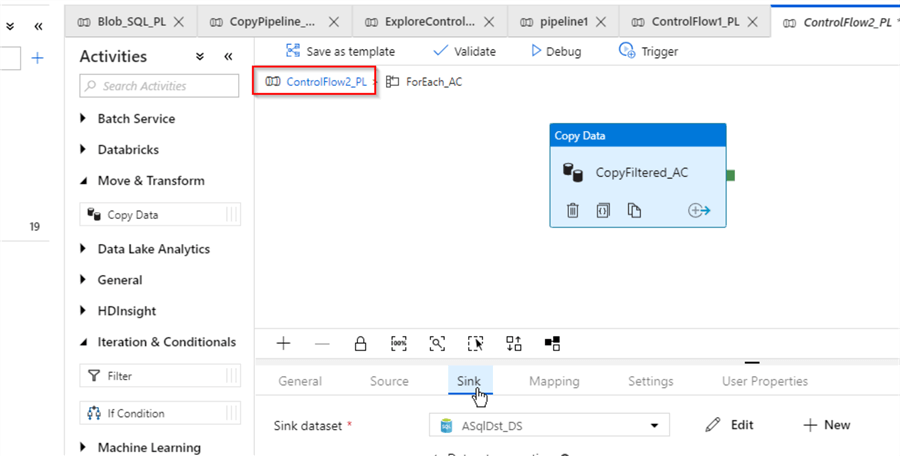

Azure Synapse SQL - Include subfolders with and. How to copy all files and folders in a specific directory using Azure Data Factory. I want to copy 2 tables from blob storage to SQL Database. Get Metadata captures the files (2 csv files) in the input container, ForEach iterates over the files in the input container, and a Copy activity runs inside the ForEach. After the file paths are passed one by one to the ForEach activity, the files are processed one by one and stored in their original locations.
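To make the Get Metadata, ForEach, and Copy flow concrete, here is a small in-memory Python sketch. It is not how Data Factory is implemented: the containers are plain dictionaries, and `get_child_items`, `copy_blob`, `run_pipeline`, and the `salary.csv` entry are hypothetical names chosen for the example. It also shows why a copy can fail with a "blob is missing" error when the path built for an item does not exist in the source.

```python
from typing import Dict, List

Container = Dict[str, bytes]  # maps "folder/file" paths to blob content


def get_child_items(container: Container, folder: str) -> List[str]:
    """Stand-in for Get Metadata: list file names directly under a folder."""
    prefix = folder.rstrip("/") + "/"
    names = []
    for path in container:
        rest = path[len(prefix):]
        if path.startswith(prefix) and rest and "/" not in rest:
            names.append(rest)
    return sorted(names)


def copy_blob(source: Container, sink: Container, path: str) -> None:
    """Stand-in for Copy: fail loudly if the source blob does not exist."""
    if path not in source:
        raise FileNotFoundError(f"The required blob is missing: {path}")
    sink[path] = source[path]


def run_pipeline(source: Container, sink: Container, folder: str) -> List[str]:
    """Stand-in for ForEach: copy every listed file, one at a time."""
    copied = []
    for name in get_child_items(source, folder):
        path = f"{folder.rstrip('/')}/{name}"  # build the full path per item
        copy_blob(source, sink, path)
        copied.append(path)
    return copied


source: Container = {
    "employeeinput/workdetail.csv": b"id,hours\n1,8\n",
    "employeeinput/salary.csv": b"id,amount\n1,100\n",  # hypothetical file
}
sink: Container = {}
copied_paths = run_pipeline(source, sink, "employeeinput")
print(copied_paths)
```

The key design point is that the path handed to the copy step is built from the current item's name on each iteration, rather than being a fixed path on the dataset.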
